An AI Co-Pilot to Monitor Data Trustability, Data Observability and More: Let AI Do the Heavy Lifting for You

Deploy Data Quality Tools and Data Trust Monitors Across Pipelines to Reduce Dark Data

Seth Rao's exclusive interview with CDO Magazine

Autonomous Data Monitoring for Cloud and Lake

Data warehouses, lakes and clouds are notoriously error-prone. Validating their Data Quality (DQ) is laborious manual work, and the results are far from satisfactory. Unfortunately, more than 95% of your data is dark data: unmonitored, unvalidated and unreliable. With every step the data moves downstream, its errors compound exponentially, and fixing them costs 10x more at each step, if you can detect them at all. Whatever you don’t detect is unmitigated business risk.

What Is DataBuck?

Data errors caused by “Systems Risks” are the biggest contributors to untrustworthy data. As ETL jobs move data around, errors creep in and steadily multiply like a cancer across the enterprise. With every step an error spreads, fixing it takes 10x more cost and effort.
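To make the “10x per step” claim concrete, here is a back-of-the-envelope sketch. It assumes, purely for illustration, a constant 10x multiplier per downstream step and a hypothetical base cost of 1 unit to fix an error at its source; neither number is a measured DataBuck result, and `cost_to_fix` is a hypothetical helper.

```python
def cost_to_fix(steps_downstream: int, base_cost: float = 1.0, multiplier: float = 10.0) -> float:
    """Illustrative cost of fixing an error after it has spread `steps_downstream` steps.

    Hypothetical model: the cost is multiplied by a constant factor at every step.
    """
    if steps_downstream < 0:
        raise ValueError("steps_downstream must be non-negative")
    return base_cost * multiplier ** steps_downstream


if __name__ == "__main__":
    # Print the cost of the same error caught at the source and 1-3 steps later.
    for n in range(4):
        print(f"{n} steps downstream: {cost_to_fix(n):,.0f} units")
```

Under this simple model, an error caught three steps downstream costs 1,000 units instead of 1, which is why catching errors early matters.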

DataBuck is autonomous data quality validation software. Using AI/ML, it automatically detects 100% of Systems Risks with minimal human intervention. It automatically sets 1,000s of validation checks and their thresholds, and it is more than 10x faster than any other tool or your own custom scripts. Thanks to AI/ML, the tool can be set up to validate entire databases or schemas in just a few clicks.
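This page does not describe how DataBuck derives its checks. As a generic illustration of what “automatically setting validation checks and thresholds” can mean, the sketch below learns a band for each metric from its history (mean ± k sample standard deviations) and flags today’s values that fall outside it. The metric names, the `k=3.0` default, and the helpers `learn_thresholds` and `validate` are hypothetical assumptions, not DataBuck’s API or algorithm.

```python
from statistics import mean, stdev


def learn_thresholds(history: dict[str, list[float]], k: float = 3.0) -> dict[str, tuple[float, float]]:
    """Derive a (low, high) band per metric from historical observations.

    Hypothetical rule: mean +/- k * sample standard deviation.
    """
    bands = {}
    for metric, values in history.items():
        if len(values) < 2:
            continue  # stdev needs at least two points; skip metrics with too little history
        mu, sigma = mean(values), stdev(values)
        bands[metric] = (mu - k * sigma, mu + k * sigma)
    return bands


def validate(today: dict[str, float], bands: dict[str, tuple[float, float]]) -> list[str]:
    """Return the metrics whose value today falls outside its learned band."""
    failures = []
    for metric, value in today.items():
        if metric in bands:
            low, high = bands[metric]
            if not (low <= value <= high):
                failures.append(metric)
    return failures


if __name__ == "__main__":
    # Hypothetical history for one table: daily row counts and e-mail null rates.
    history = {
        "row_count": [10_000, 10_250, 9_900, 10_100, 10_050],
        "null_rate_email": [0.010, 0.012, 0.011, 0.009, 0.010],
    }
    bands = learn_thresholds(history)
    print(validate({"row_count": 4_000, "null_rate_email": 0.011}, bands))
```

Here the sudden drop to 4,000 rows is flagged (`['row_count']`) while the normal null rate passes. A production tool would use richer models and many more metrics per column; the point is only that thresholds come from the data instead of being hand-coded.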

Customers report these benefits:

(i) Higher trust in reports, analytics & models

(ii) Lower data maintenance work & cost

(iii) 10x efficiency in scaling Data Quality ops

When Is DataBuck Most Powerful?

Current data monitoring and validation tools and processes fare very poorly under the conditions listed below, and these are exactly the conditions where DataBuck excels:

- Cloud/Lake use

- Dynamic data, for example, operational and transactional data

- High data volumes

- New data sources or changing data structures

- New uses for data: data being used for purposes it was not intended for when it was collected

People productivity boost: >80%

Reduction in unexpected errors: 70%

Cost reduction: >50%

Reduction in time to onboard a data set: ~90%

Increase in processing speed: >10x

Cloud native

DataBuck's comprehensive set of 4 data validation capabilities

Module 1

Observability

DataBuck’s unified Observability platform:

Powered by Machine Learning, DataBuck is an advanced Observability tool that prevents critical data issues before they break the data pipeline or reach data users.

Module 2

Trustability

Measure and monitor the trustworthiness and usability of data on prem, on the cloud, and across the entire data pipeline with DataBuck's Trustability Module.

Module 3

Data Quality

Autonomous Data Quality Validation:

DataBuck is automated data monitoring and validation software that autonomously validates 1,000s of data sets in a few clicks, with lower data maintenance work and costs.

Module 4

Data Matching

Cross Platform Data Matching with ML:

Reconcile complex data across multiple platforms automatically using no-code Machine Learning to detect errors before they infect multiple systems downstream.
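The page does not detail how DataBuck’s no-code ML matching works, so the sketch below shows only the basic idea behind cross-platform reconciliation using plain exact matching, not ML. It compares two extracts of the same table by primary key and a per-row content hash, then reports rows missing on either side and rows whose contents differ. The record layout, the `id` key, and the `fingerprint`/`reconcile` helpers are hypothetical.

```python
import hashlib
from typing import Iterable


def fingerprint(row: dict) -> str:
    """Stable hash of a row's contents (keys sorted so column order does not matter)."""
    canonical = "|".join(f"{k}={row[k]}" for k in sorted(row))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def reconcile(source: Iterable[dict], target: Iterable[dict], key: str) -> dict[str, list]:
    """Compare two extracts keyed on `key` and classify the differences."""
    src = {row[key]: fingerprint(row) for row in source}
    tgt = {row[key]: fingerprint(row) for row in target}
    return {
        "missing_in_target": sorted(src.keys() - tgt.keys()),
        "missing_in_source": sorted(tgt.keys() - src.keys()),
        "mismatched": sorted(k for k in src.keys() & tgt.keys() if src[k] != tgt[k]),
    }


if __name__ == "__main__":
    # Hypothetical extracts of the same table from two platforms.
    warehouse = [
        {"id": 1, "amount": 100.0, "currency": "AED"},
        {"id": 2, "amount": 250.0, "currency": "AED"},
        {"id": 3, "amount": 75.5, "currency": "USD"},
    ]
    lake = [
        {"id": 1, "amount": 100.0, "currency": "AED"},
        {"id": 2, "amount": 205.0, "currency": "AED"},  # transposed digits
        {"id": 4, "amount": 12.0, "currency": "USD"},
    ]
    print(reconcile(warehouse, lake, key="id"))
```

This prints `{'missing_in_target': [3], 'missing_in_source': [4], 'mismatched': [2]}`. Because values are hashed by their string form, types must be normalized first (for example, `100` and `100.0` hash differently); handling such near-matches is where a learned matching approach would go beyond this sketch.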

In The News

Popular publications feature FirstEigen

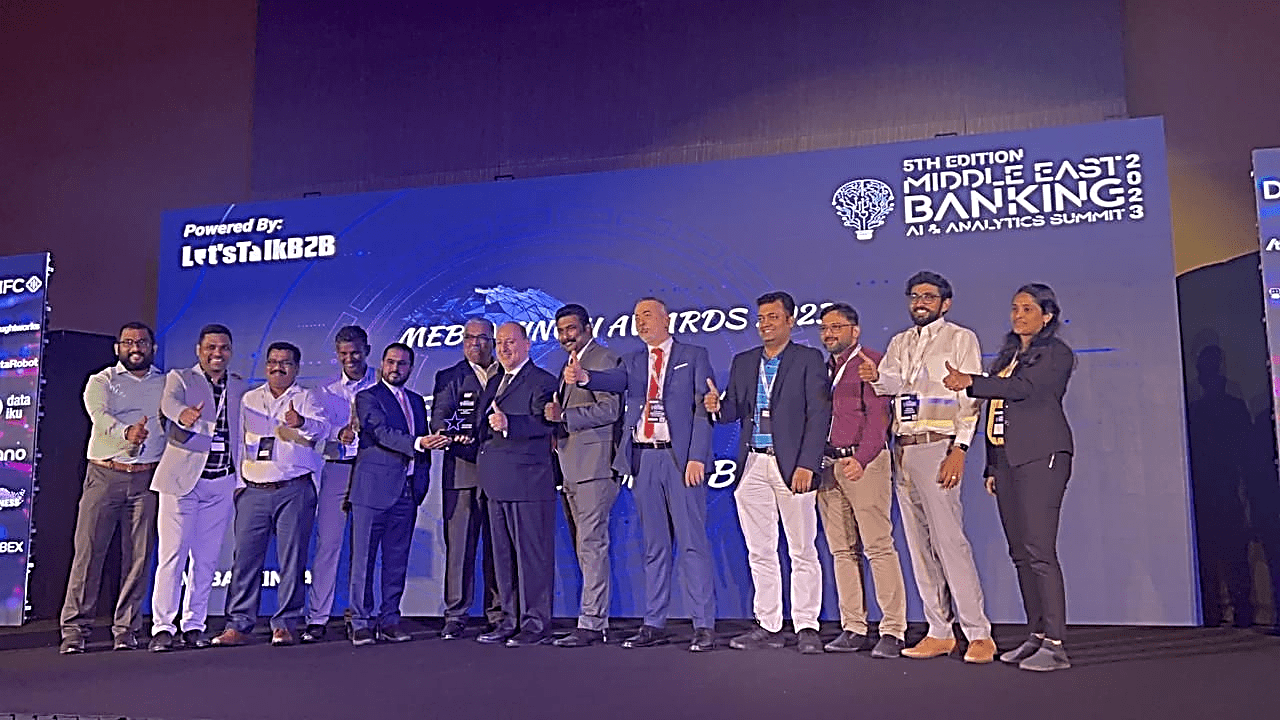

DataBuck customer Roland Doummar, SVP and Head of Data Governance at First Abu Dhabi Bank, and his team were awarded Best Use Case of Data Management for 2023 at the Middle East Banking AI & Analytics Summit (#MEBANKINGAI).

Published in Entrepreneur.com

Strategies for Achieving Data Quality in the Cloud

With more and more applications moving to the cloud, the quality of data is becoming a growing concern. Erroneous data can cause all sorts of problems for businesses.

Published in Dataversity

How to Leverage Machine Learning to Identify Data Errors in a Data Lake

Without comprehensive data quality validation, a data lake becomes a data swamp that offers no clear link to value creation.

Simplify Data Quality Monitoring and Data Quality Validation with DataBuck

Machine Learning-Guided Cloud and Lake Data Monitoring and Validation Tool

DataBuck can validate your Big Data autonomously and at 10x the speed of any other data validation tool or your own custom scripts. Clean data in 3 clicks with no coding.