Data quality testing guards your business against low-quality data. If the quality of your company’s information assets is impacting revenue, it’s time to consider a solution.

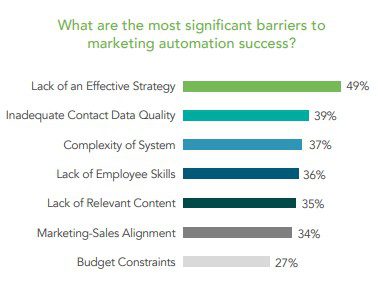

Companies from every industry rely on accurate data for analysis and data-driven insights. Without it, businesses struggle to remain competitive, productive, and profitable in their market. For example, in a survey conducted by Dun & Bradstreet, 39% of marketers said inadequate contact data quality was the most significant barrier to effective marketing automation.

Source: Dun & Bradstreet – Optimize Your Marketing Automation (dnb.com)

Marketers use contact data to develop campaigns that acquire and retain customers, the lifeblood of nearly every organization. When that contact data is untrustworthy, it chokes out marketing strategies that would otherwise be effective.

If your company is looking to eliminate bad data sets, you need this A-to-Z guide to testing your data quality. That way, you can find an effective solution for your unreliable data.

Key Takeaways:

- Achieving data consistency and reliability requires organizations to continually monitor their information assets based on the seven pillars of data quality.

- Assessing accuracy is the first step in managing data sets, so that data-driven decisions and analytics produce valuable insights.

- You need to run tests that build a baseline, so you can identify gaps in your data assets and improve data quality.

- Check consistency, determine your data-entry configuration, and then evaluate how effective your data quality testing has been.

Why You Should Be Testing Data Quality

More than 95% of a company’s data is what’s known as “dark data”: information that is unmonitored, unvalidated, and unreliable.

As this data moves downstream within your organization, its errors compound. The longer dark data remains unchecked, the more it costs to fix.

If you want consistent and reliable datasets, testing is necessary. So is data quality software that can validate your organization’s Big Data autonomously.

The seven pillars of data quality are:

- Consistency: Whether stored data in one location matches relevant information stored elsewhere.

- Accuracy: Whether a piece of data reflects the real-world value it describes.

- Completeness: Whether the information is as comprehensive as expected, with no required values missing.

- Validity: Whether the data format, style, and type follow a specific set of criteria and/or best practices.

- Uniqueness: Whether each data record appears only once within the database.

- Timeliness: Whether the information is available at the time you need to access it.

- Integrity: Whether different data sets can be joined accurately to reflect a larger picture, because the relationships between them are well defined.

You can measure each of these pillars with metrics that produce quantifiable results. Not only will you find out which data is accurate and consistent, but ambiguous interpretations of your data will give way to precise analysis.
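To make "metrics with quantifiable results" concrete, here is a minimal sketch that scores four of the pillars on a tiny, hypothetical set of customer records. The field names, the simplified email rule, and the 90-day freshness window are all assumptions chosen for illustration, not a standard definition of any pillar. Consistency, accuracy, and integrity need a second source to compare against, so they are left out of this single-table example.

```python
import re
from datetime import date

# Hypothetical customer records; the field names are illustrative only.
records = [
    {"id": 1, "email": "ana@example.com", "updated": date(2024, 5, 1)},
    {"id": 2, "email": "ben@example", "updated": date(2023, 1, 15)},
    {"id": 3, "email": "ana@example.com", "updated": date(2024, 4, 20)},
    {"id": 4, "email": None, "updated": date(2024, 5, 2)},
]

# Assumed, simplified format rule (something@domain.tld); real rules are usually stricter.
EMAIL_PATTERN = re.compile(r"[^@\s]+@[^@\s]+\.[^@\s]+")


def completeness(rows, field):
    """Completeness: share of rows where the field is populated."""
    return sum(r[field] is not None for r in rows) / len(rows)


def validity(rows, field, pattern):
    """Validity: share of populated values that match the expected format."""
    values = [r[field] for r in rows if r[field] is not None]
    return sum(pattern.fullmatch(v) is not None for v in values) / len(values)


def uniqueness(rows, field):
    """Uniqueness: distinct populated values divided by all populated values."""
    values = [r[field] for r in rows if r[field] is not None]
    return len(set(values)) / len(values)


def timeliness(rows, field, as_of, max_age_days):
    """Timeliness: share of rows refreshed within max_age_days of as_of."""
    return sum((as_of - r[field]).days <= max_age_days for r in rows) / len(rows)


scorecard = {
    "completeness": completeness(records, "email"),
    "validity": validity(records, "email", EMAIL_PATTERN),
    "uniqueness": uniqueness(records, "email"),
    "timeliness": timeliness(records, "updated", date(2024, 5, 31), 90),
}
for pillar, score in scorecard.items():
    print(f"{pillar}: {score:.0%}")
```

With this sample data the scorecard prints 75% completeness, 67% validity, 67% uniqueness, and 75% timeliness. Expressing every pillar as a share between 0 and 1 keeps the scores comparable, so you can track them side by side over time.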

A-to-Z Guide to Data Validation and Quality Testing

Companies collect data through various channels and maintain it across different databases. You can take proactive steps to ensure this information remains up to date.

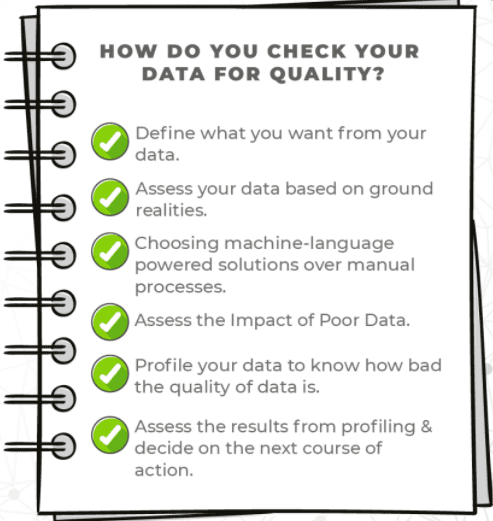

That way, your organization makes agile business decisions based on high-quality data. To get there, test your data using these five steps:

1. Assess Accuracy

To test specific data sets, you need metrics that show which information you are targeting and where it can improve. Choosing those metrics requires knowing how your company uses that information. What problems would higher-quality data solve? Lead data, for example, is often inaccurate or duplicated. What can be done to fix those inaccuracies? And are transaction, inventory, or payroll inaccuracies causing issues?

Ways to measure data accuracy include assessing the following metrics:

- Amount of returned mail

- Personalized offers accepted

- Number of complete contact records

- Errors in transactions

- Inventory discrepancies

- Payroll issues

The list goes on, depending on your industry. Start by checking these metrics, then move on to more complex data sets. Doing this manually is time-consuming, however, so consider integrating AI-powered, automated data quality tools, which can boost productivity by at least 50%.
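As an illustration of the "inventory discrepancies" metric listed above, the sketch below treats accuracy as agreement between what a system records and what is observed in reality, here a physical stock count. The SKU names and quantities are hypothetical, and using the physical count as ground truth is an assumption of this example.

```python
# Hypothetical stock levels: what the inventory system records vs. a physical count.
system_counts = {"SKU-100": 40, "SKU-200": 12, "SKU-300": 7, "SKU-400": 0}
physical_counts = {"SKU-100": 40, "SKU-200": 10, "SKU-300": 7, "SKU-400": 3}


def inventory_discrepancies(recorded, observed):
    """Map each SKU whose recorded quantity differs from the observed one to (recorded, observed)."""
    skus = sorted(recorded.keys() | observed.keys())
    return {
        sku: (recorded.get(sku), observed.get(sku))
        for sku in skus
        if recorded.get(sku) != observed.get(sku)
    }


def discrepancy_rate(recorded, observed):
    """Accuracy metric: share of SKUs where the records disagree with reality."""
    skus = recorded.keys() | observed.keys()
    return len(inventory_discrepancies(recorded, observed)) / len(skus)


print(inventory_discrepancies(system_counts, physical_counts))
print(f"discrepancy rate: {discrepancy_rate(system_counts, physical_counts):.0%}")
```

Here SKU-200 and SKU-400 disagree with the physical count, giving a 50% discrepancy rate. The same recorded-versus-observed pattern applies to other metrics on the list, such as comparing mailing addresses against returned mail.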

2. Build a Baseline

You can’t push for company-wide data quality improvements until you build a baseline that identifies data gaps. A baseline is also an effective way to flush out data errors.

For example, if you are in the shipping and logistics sector, you will want a proactive approach to managing returned packages. Tools are available to help you validate addresses before outbound packages ship from your facility.

Similarly, email marketers can validate email addresses before they launch a campaign, which can also improve their customer engagement metrics.
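For the email example, a baseline can start as a simple count of malformed addresses recorded before any cleanup. The sketch below is a minimal illustration under an assumed, simplified format rule. A format check only catches typos and junk entries; it cannot confirm that a mailbox actually exists, which typically requires a dedicated verification service. The sample list is hypothetical.

```python
import re

# Assumed, simplified format rule; it catches typos but cannot confirm a mailbox exists.
EMAIL_PATTERN = re.compile(r"[^@\s]+@[^@\s]+\.[^@\s]+")


def email_baseline(addresses):
    """Split a mailing list into valid and invalid addresses and record the invalid rate."""
    valid, invalid = [], []
    for address in addresses:
        cleaned = address.strip()  # stray whitespace is a common, fixable entry error
        if EMAIL_PATTERN.fullmatch(cleaned):
            valid.append(cleaned)
        else:
            invalid.append(cleaned)
    return {"valid": valid, "invalid": invalid, "invalid_rate": len(invalid) / len(addresses)}


mailing_list = ["ana@example.com", "ben@example", "carla@example.org", "not-an-email", " dev@example.net "]
baseline = email_baseline(mailing_list)
print(baseline["invalid"])
print(f"invalid rate: {baseline['invalid_rate']:.0%}")
```

This run flags two of five addresses, a 40% invalid rate. Saving that number before the campaign gives you the baseline to compare against after the list has been cleaned.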

3. Check Consistency

The next step is to check for consistency in the data. Regardless of where you look within your database, you shouldn’t find contradictions.

For example, if you manage an eCommerce business, your payment system and your CRM system should show the same number of transactions for each customer.

If one shows that a customer purchased from your company five times but the other shows only four, your metrics should flag that inconsistency so you can fix the issue.
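The payment-versus-CRM check above boils down to counting transactions per customer in each system and reporting where the counts differ. The sketch below assumes each system can export one row per transaction keyed by a shared customer ID; the IDs and exports are hypothetical.

```python
from collections import Counter

# Hypothetical exports: one entry per transaction, identified by customer ID.
payment_rows = ["C1", "C1", "C1", "C1", "C1", "C2", "C2", "C3"]
crm_rows = ["C1", "C1", "C1", "C1", "C2", "C2", "C3", "C4"]


def count_mismatches(payments, crm):
    """Return {customer: (payment_count, crm_count)} for every customer whose counts differ."""
    pay_counts = Counter(payments)
    crm_counts = Counter(crm)
    mismatches = {}
    # Check the union of customers so someone missing from one system is also flagged.
    for customer in sorted(pay_counts.keys() | crm_counts.keys()):
        if pay_counts[customer] != crm_counts[customer]:  # Counter returns 0 for missing keys
            mismatches[customer] = (pay_counts[customer], crm_counts[customer])
    return mismatches


print(count_mismatches(payment_rows, crm_rows))
```

This reports customer C1 with five payments but four CRM transactions, matching the scenario in the text, and customer C4, who appears only in the CRM. Checking the union of customer IDs, rather than only those in one system, is what catches that second kind of gap.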

4. Determine Data-Entry Configuration

Once you have established which areas of your data sets need fixing, determine a data-entry configuration that supports data quality. Batch verification can fix existing problems, while point-of-capture validation checks new data in real time as it is entered.
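One way to picture the two configurations is a single set of validation rules applied in two places: a batch job that scans records already stored, and an entry form that refuses bad input before it is saved. The rules below (a required name and a US-style ZIP code) and the `CaptureForm` class are hypothetical, chosen only to illustrate the pattern.

```python
import re

# Assumed rule set: a non-empty name and a US-style ZIP code (12345 or 12345-6789).
ZIP_PATTERN = re.compile(r"\d{5}(-\d{4})?")


def validate_record(record):
    """Return the problems found in one record; an empty list means it passed."""
    problems = []
    if not record.get("name"):
        problems.append("missing name")
    if not ZIP_PATTERN.fullmatch(record.get("zip") or ""):
        problems.append("invalid ZIP code")
    return problems


def batch_verify(records):
    """Batch verification: scan stored records and report which ones need fixing."""
    report = {}
    for index, record in enumerate(records):
        problems = validate_record(record)
        if problems:
            report[index] = problems
    return report


class CaptureForm:
    """Point-of-capture validation (hypothetical form): reject bad input before it is stored."""

    def __init__(self):
        self.saved = []

    def submit(self, record):
        problems = validate_record(record)
        if not problems:
            self.saved.append(record)
        return problems  # a non-empty list would be shown to the user immediately


existing = [{"name": "Ana", "zip": "30301"}, {"name": "", "zip": "3030"}, {"name": "Ben", "zip": "30301-1234"}]
print(batch_verify(existing))

form = CaptureForm()
print(form.submit({"name": "Cy", "zip": "ABCDE"}))
```

Sharing one `validate_record` function between both paths means records fixed in the batch pass are held to the same rules as new entries, so the two configurations cannot drift apart.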

5. Evaluate Effectiveness

Effective information is the reason you test data quality in the first place, since poor data costs businesses roughly 20% of their revenue. Once you run tests on your data, check how effective those tests were.

Is there still duplicate data? Do glaring errors (or even subtle ones) continue to spread company-wide? Have you seen a positive improvement in these areas?

Assessing these results will help you identify issues that need further refinement. It will also help you confirm where your data quality has improved.
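To answer "Is there still duplicate data?" with a number, you can run the same metric on snapshots taken before and after a cleanup and compare the results. The sketch below assumes duplicates are detected by trimming whitespace and lowercasing; real matching rules are often fuzzier. The snapshots are hypothetical.

```python
def duplicate_rate(values):
    """Share of records that repeat an earlier value after trimming and lowercasing."""
    normalized = [v.strip().lower() for v in values]
    return (len(normalized) - len(set(normalized))) / len(normalized)


def compare_runs(metric, before, after):
    """Report a metric before and after a cleanup run, plus the change."""
    before_score, after_score = metric(before), metric(after)
    return {"before": before_score, "after": after_score, "change": after_score - before_score}


# Hypothetical snapshots of the same email column before and after deduplication.
before_cleanup = ["ana@example.com", "ANA@example.com", "ben@example.com", "ben@example.com ", "carla@example.org"]
after_cleanup = ["ana@example.com", "ben@example.com", "carla@example.org"]

result = compare_runs(duplicate_rate, before_cleanup, after_cleanup)
print(f"duplicates before: {result['before']:.0%}, after: {result['after']:.0%}")
```

Here the duplicate rate drops from 40% to 0%. Because `compare_runs` accepts any metric function, the same before-and-after comparison can be reused for other checks you track.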

How FirstEigen Can Help Your Organization with Data Quality Testing

At FirstEigen, we want to help empower businesses to better understand their data assets. DataBuck, our most effective data quality tool, helps transform company data to ensure accuracy, consistency, and integrity. It allows you to reduce data errors and avoid the unnecessary costs associated with bad data.

Obtain insights into your organization’s data quality. Contact us today to schedule a demo to see what we can do to help you maintain accurate data.

Check out these articles on Data Trustability, Observability, and Data Quality.

- 6 Key Data Quality Metrics You Should Be Tracking (https://firsteigen.com/blog/6-key-data-quality-metrics-you-should-be-tracking/)

- How to Scale Your Data Quality Operations with AI and ML (https://firsteigen.com/blog/how-to-scale-your-data-quality-operations-with-ai-and-ml/)

- 12 Things You Can Do to Improve Data Quality (https://firsteigen.com/blog/12-things-you-can-do-to-improve-data-quality/)

- How to Ensure Data Integrity During Cloud Migrations (https://firsteigen.com/blog/how-to-ensure-data-integrity-during-cloud-migrations/)