Seth Rao

CEO at FirstEigen

Building Trust in Data: The Janitorial Work Critical for Business Success

Arizona State University

Data is the lifeblood of any organization, and ensuring that it is accurate and trustworthy is essential to success. Yet, data engineering is often seen as a dull and unglamorous task. In reality, though, it is a critical function that keeps operations running smoothly. This blog post will explore why building trust in data is so important and offer some tips on how to do it effectively.

What Does “Trust in Data” Mean?

Trust when it comes to data is an absolutely essential concept, as it determines how we store, process, and use the data. Financial data, retail data, operational data, and transaction data can shape decisions that have an enormous financial impact. It is important to ensure that all of these kinds of data are accurate and no unexpected errors or interpretation mistakes cause disruption or significant losses. The consequences of not having trust in data can be dire; poor decision-making due to inaccurate or unreliable information, and increased time spent correcting inaccurate records. All this puts a strain on resources which can have a significant knock-on effect felt across any business – especially larger ones with more complex and varied datasets. Lack of trust in data is an existential threat to businesses.

How to Ensure Trustworthiness of Data? (Hint: It’s Not Just the Janitors’ Job)

Automate data validation processes: It’s a great way to ensure that all data is trustworthy. Automation takes the manual rule-writing process out of the equation—it’s faster and more consistent. Instead of someone manually writing and verifying rules, algorithms, policies, or specific criteria for all data before it can be trusted, automated solutions can validate trust in data every step of the flow. However, automating this process itself is not enough to make sure data is trustworthy; making sure reports align with corporate goals, defining a standard set of metrics and properties per report/table needed to ensure data accuracy are also vital steps. No one person can take on this responsibility – it requires collaboration from within an organization including stakeholders from many different departments and roles. Ultimately everyone plays their own role in making sure that each step of the way returns reliable and accurate results.

The Business Benefits of Trustworthy Data

Trust in data is the basis for an organization’s success and security. When trusted data is used, organizations can expect a reliable source of information from which to draw conclusions that will ultimately lead to improved performance of processes like anti-money laundering (AML) monitoring and regulatory reporting. This has a huge impact on banks ensuring compliance with regulatory policies, as well as saving pharma companies millions by submitting trusted data to the FDA and retailers being able to depend on the accuracy of predictive analytics when managing their supply chain. It’s important that individuals working in these organizations trust the machine-generated data they are using in order to ensure accuracy and reliability of their decisions.

Getting Started With Data Trust

Establishing trust in data is not just a onetime activity at the end or beginning. It has to be done continuously throughout the entire data pipeline. Automating trustability reports can help flag any inaccuracies or issues with the data and will increase trust in the results. You can also leverage machine learning techniques to intelligently monitor your data sets and trustworthiness over time. Regular checks, and tightening of protocols are key steps to ensuring trustworthiness in your data sets and successfully getting started with building trust.

Trust is essential when it comes to data – without trust, data is virtually worthless. The lack of trust in data can lead to negative consequences for organizations and individuals alike, but fortunately there are ways to increase trust in data. One way to do this is through DataBuck, an autonomous Data Trustability platform that generates objective Data Trust Scores using no-code ML. Implementing DataBuck in your organization can reduce data rework efforts by 90% and improve analytical model accuracy by 70%. If you’re interested in getting started with increasing trust in your data, contact us today for a free trial . We can help you to get started and on your way towards data trustworthiness.

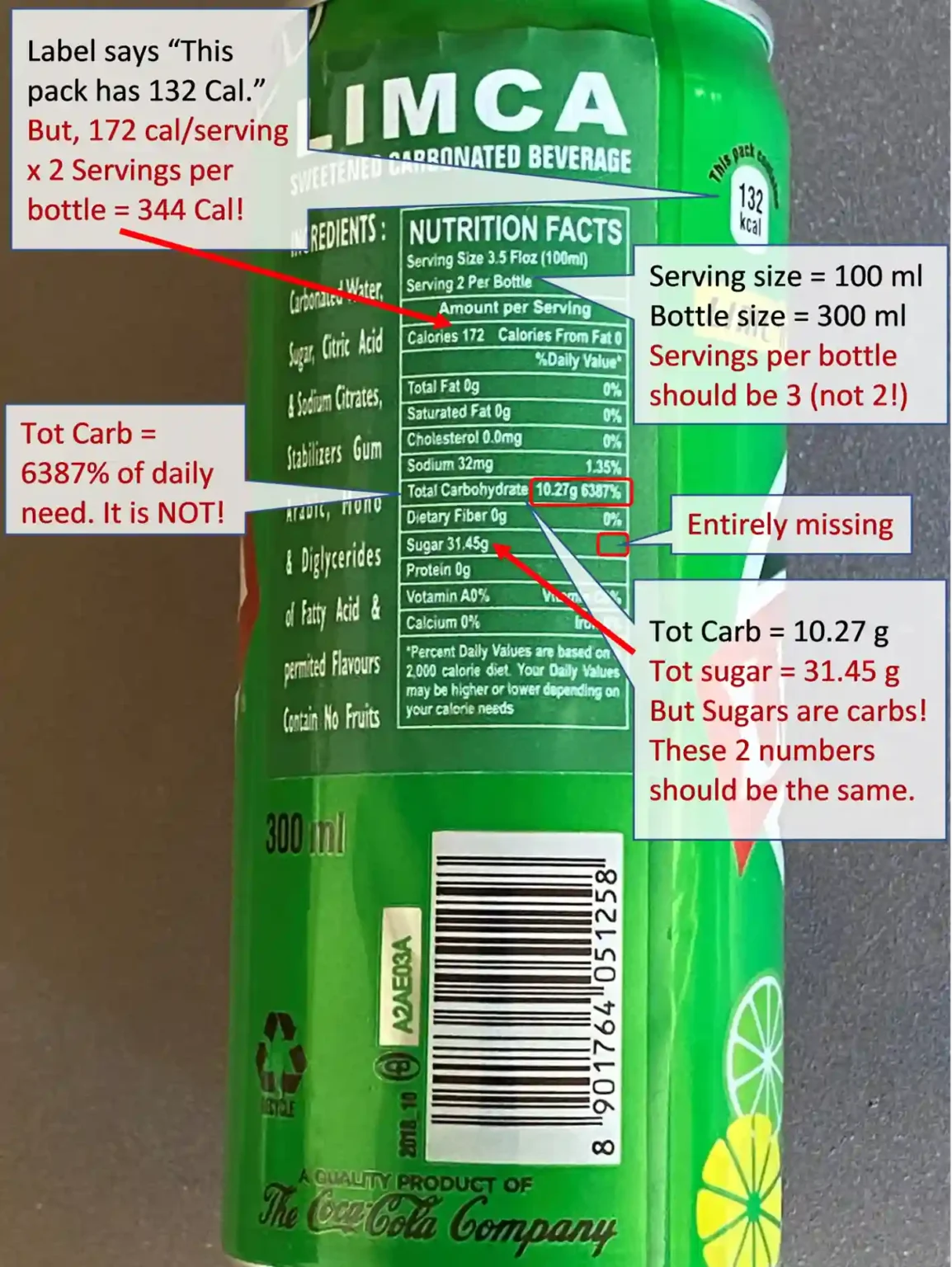

Case Study: Would You Trust This Product Label?

Limca is a product of Coca Cola. This can was bought in Chicago. How many data errors can you find on this label? How many errors will you find acceptable?

- Serving size = 100 ml, package size = 300 ml; Servings per bottle should be 3, but the label lists it as 2.

- Label says “This pack has 132 K Cal.” But the details say it has 172 Cal/serving.

- The label also says the container has 2 Servings per package, so the package should have 344 K Cal not 132 K Cal. Numbers are inconsistent even within the label.

- Tot Carb = 10.27 gm, and total sugar = 31.45 gm. But Sugars are carbs! These 2 numbers should be the same.

- Tot Carb = 6387% of daily need. It is NOT!

- 31 gm of sugar per serving (100 ml). This package of 300 ml is unlikely to have 93 gm of sugar.

- Important data is missing from the label. “% of daily value” of sugar per serving is required and is entirely missing.

These types of data errors could damage a brand’s reputation and lead to legal or financial issues. In the same way, businesses need accurate and consistent data to maintain trust with customers and partners.

Why DataBuck is Your Best Solution for Data Trust?

To automate and enhance your data quality management, consider DataBuck, an autonomous data validation tool that generates objective trust scores for your data. It automates over 70% of traditional data monitoring tasks and uses machine learning to create new data quality rules in real-time.

With DataBuck, organizations can reduce data rework by 90% and improve analytical model accuracy by 70%. It’s an essential tool for maintaining trust in your data pipeline.

Turn to DataBuck for Reliable Data Validation

To ensure robust data testing and observability, rely on DataBuck. Its machine-learning-powered data quality management solution automates the validation process, ensuring your organization has access to accurate, trustworthy data.

Contact FirstEigen today for a free trial and start building trust in your data.

Check out these articles on Data Trustability, Observability & Data Quality Management-

FAQs

Building trust in data is crucial because it ensures the accuracy, reliability, and consistency of the information used for decision-making. Without trustworthy data, businesses risk making poor decisions, losing credibility, and facing financial consequences due to inaccurate insights.

Discover How Fortune 500 Companies Use DataBuck to Cut Data Validation Costs by 50%

Recent Posts

Why Data Trust Is the Real Foundation of AI Success

Enterprises are racing to adopt AI—LLMs, copilots, and autonomous agents that can trigger actions across systems. But as AI moves…

AI-Powered Data Quality Validation for Smarter AML Detection

Fraud, Anti-Money Laundering (AML) and counter-terrorist financing (CTF) programs are only as good as the data they consume. Advanced monitoring…

Managing Tariff Implications Through Data Integrity in Global Supply Chains

In today's global marketplace, supply chains span continents. From consumer electronics to industrial machinery, companies rely on global sourcing and...

Get Started!